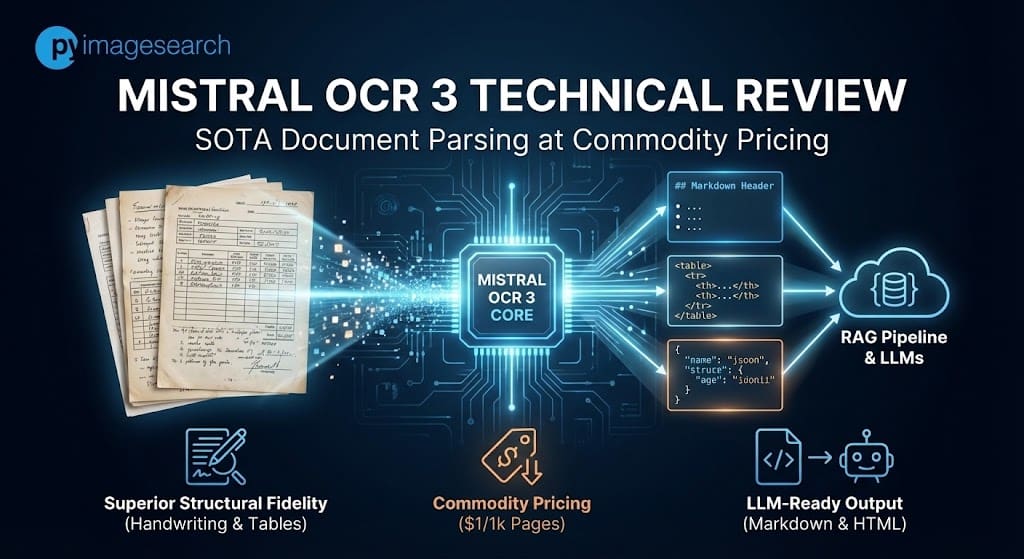

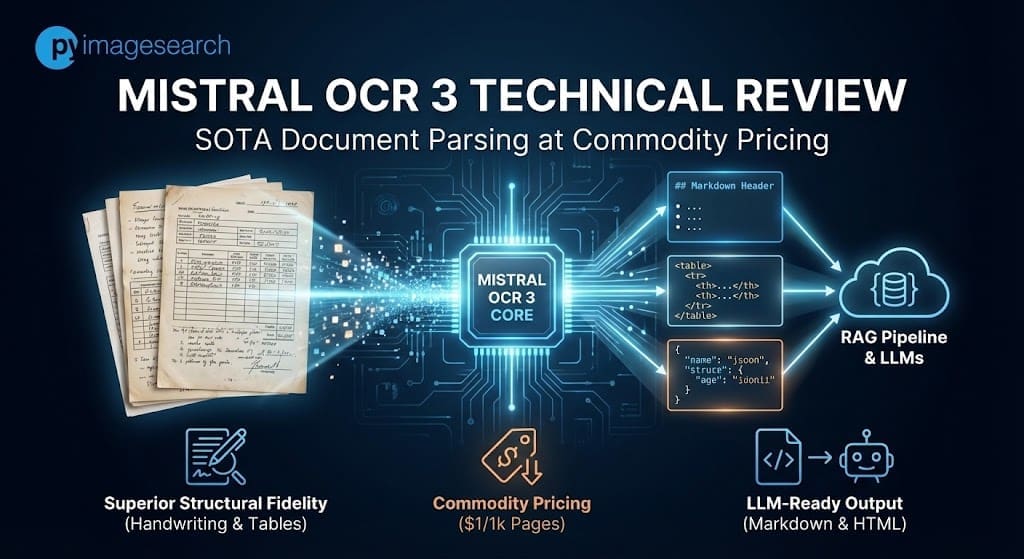

The commoditization of optical character recognition (OCR) has historically been a race to the bottom on price, often at the expense of structural fidelity. However, the release of Mistral OCR 3 signals a distinct change in the market. By claiming state-of-the-art accuracy on complex tables and handwriting, and outperforming AWS Textract and Google Document AI by significant margins, Mistral is positioning its proprietary model not only as a cheaper alternative but as a technically superior parsing engine for Retrieval-Augmented Generation (RAG) pipelines.

This technical review examines the architecture, benchmark performance against hyperscalers, and the operational realities of deploying Mistral OCR 3 in a production environment.

Innovation: Structure-Aware Architecture

Mistral OCR 3 is a proprietary, efficient model specifically optimized for converting document layouts to LLM-ready Markdown and HTML. Unlike general-purpose multimodal LLMs, it focuses on structure preservation, particularly table reconstruction and dense form parsing, and is available through the mistral-ocr-2512 endpoint.
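To make the endpoint concrete, here is a minimal sketch of how a request might be assembled using only the Python standard library. The URL path (`/v1/ocr`), the `document` payload shape, and the helper name `build_ocr_request` are assumptions for illustration and should be checked against the Mistral API reference; only the model id comes from this review. The request is built but deliberately not sent.

```python
import json
import urllib.request

# Assumed endpoint path and payload shape; verify against the Mistral API reference.
OCR_URL = "https://api.mistral.ai/v1/ocr"
MODEL_ID = "mistral-ocr-2512"  # model id quoted in this review


def build_ocr_request(api_key: str, document_url: str) -> urllib.request.Request:
    """Build (but do not send) a POST request asking the OCR endpoint to parse a document."""
    payload = {
        "model": MODEL_ID,
        "document": {"type": "document_url", "document_url": document_url},
    }
    return urllib.request.Request(
        OCR_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder key and URL, for illustration only.
    req = build_ocr_request("YOUR_API_KEY", "https://example.com/report.pdf")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # Passing req to urllib.request.urlopen would perform the actual call.
```

Keeping request construction separate from sending makes the payload easy to inspect and unit test before any pages are billed.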

While traditional OCR engines (like Tesseract or early AWS Textract iterations) focus primarily on bounding box coordinates and raw text extraction, Mistral OCR 3 is designed to solve the “structure loss” problem that plagues modern RAG pipelines.

The model has been described as “much smaller than most competing solutions” (1), yet it outperforms larger vision-language models on dense-document tasks. Its primary innovation lies in its output modality: instead of returning a JSON list of coordinates (which requires post-processing to reconstruct the layout), Mistral OCR 3 outputs Markdown enriched with HTML-based table reconstruction (1).

This means that the model has been trained to recognize document semantics: for example, that a grid of numbers is a table rather than a scattering of unrelated text.
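Because tables arrive as HTML embedded in Markdown, downstream code can recover rows and cells without any coordinate math. The sketch below uses Python's standard `html.parser` to pull tables out of a Markdown string. The sample document and the assumption that tables use plain `<table>`, `<tr>`, `<th>`, and `<td>` tags are illustrative, not taken from actual model output, and nested tables are not handled.

```python
from html.parser import HTMLParser


class TableExtractor(HTMLParser):
    """Collect every <table> in a document as a list of rows of cell strings."""

    def __init__(self):
        super().__init__()
        self.tables = []   # one list of rows per table seen so far
        self._row = None   # cells of the row being read, or None
        self._cell = None  # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.tables.append([])
        elif tag == "tr" and self.tables:
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Text outside a cell (ordinary Markdown prose) is ignored.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.tables[-1].append(self._row)
            self._row = None
            self._cell = None


def extract_tables(markdown_text):
    """Return all HTML tables found in a Markdown string."""
    parser = TableExtractor()
    parser.feed(markdown_text)
    parser.close()
    return parser.tables


# Made-up sample in the Markdown-plus-HTML style described above.
SAMPLE = """# Q3 Summary

Revenue grew in both regions.

<table>
<tr><th>Region</th><th>Revenue</th></tr>
<tr><td>EMEA</td><td>1,200</td></tr>
<tr><td>APAC</td><td>950</td></tr>
</table>
"""

if __name__ == "__main__":
    for row in extract_tables(SAMPLE)[0]:
        print(row)
```

The extracted rows can then be chunked or serialized for a RAG index while the surrounding Markdown prose is handled separately.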

| Metric | Mistral OCR 3 | Azure Doc Intelligence | DeepSeek OCR | Google DocAI |
|---|---|---|---|---|
| Handwritten accuracy (%) | 88.9 | 78.2 | 57.2 | 73.9 |
| Historical scanned accuracy (%) | 96.7 | 83.7 | 81.1 | 87.1 |

Note: The score of 57.2 for DeepSeek highlights that general-purpose open-weight models still struggle with handwriting variability compared to specialized proprietary endpoints.

Section 2: Structural Integrity (Tables and Forms)

For financial analysis and RAG, table fidelity is binary: it is either usable or not. Mistral OCR 3 shows better detection of merged cells and headers.

| Metric | Mistral OCR 3 | AWS Textract | Azure Doc Intelligence |
|---|---|---|---|
| Complex table accuracy (%) | 96.6 | 84.8 | 85.9 |
| Form accuracy (%) | 95.9 | 84.5 | 86.2 |
| Multilingual (English) accuracy (%) | 98.6 | 93.9 | 93.5 |

Figure 2: Comparative accuracy across document tasks. Note the significant delta in the "Complex Tables" and "Handwritten" categories.


Balanced Criticism: Edge Cases and Failure Modes

Despite high overall scores, early adopters report problems with complex multi-column layouts and inconsistent results depending on the input image format. While the model excels at logical structure, developers should be aware of specific quirks in how PDF and JPEG inputs are handled.

At PyImageSearch, we emphasize that benchmark scores rarely tell the whole story. Analysis of early adopter feedback and community testing reveals specific barriers:

- Format Sensitivity (PDF vs. Image): Developers have noted a "JPEG vs. PDF" inconsistency. In some instances, converting a PDF page to a high-resolution JPEG before submitting it gives better table extraction results than submitting the raw PDF. This suggests that the API's internal PDF rasterization step may introduce noise.

- Multi-Column Hallucinations: While table extraction is state-of-the-art, "complex multi-column layouts" (such as magazine-style formatting with irregular text flow) remain a challenge. The model sometimes attempts to impose table structure on non-tabular column text.

- "Black Box" Limitation: Unlike open-weight options, this is a fully SaaS offering. You cannot fine-tune the model on proprietary datasets (for example, specific medical forms) as you can with locally hosted Vision Transformers.

- Production Supervision: Despite the 74% win rate over version 2, enterprise users caution that "clean" structured output can sometimes hide OCR hallucination errors. High-fidelity Markdown can look perfect to the human eye even when specific digits are transposed, so financial data still requires human-in-the-loop (HITL) verification.
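One inexpensive way to decide which pages reach a human reviewer is an arithmetic cross-check on extracted tables. The sketch below flags a table when its line items do not add up to the stated total. It assumes the table has already been parsed into rows of strings, that the label sits in the first column and the amount in the second, and that the total row is labelled "Total"; all of these, along with the function names, are hypothetical choices for illustration. A check like this narrows the review queue but does not replace HITL verification.

```python
from decimal import Decimal, InvalidOperation


def parse_amount(cell):
    """Convert a cell such as '1,200.50' to Decimal, or return None if it is not numeric."""
    try:
        return Decimal(cell.replace(",", "").strip())
    except InvalidOperation:
        return None


def needs_review(rows, amount_col=1, total_label="Total"):
    """Return True when the line items do not add up to the stated total."""
    items, total = [], None
    for row in rows:
        if len(row) <= amount_col:
            continue  # malformed row: nothing to read in the amount column
        value = parse_amount(row[amount_col])
        if value is None:
            continue  # header or other non-numeric row
        if row[0].strip().lower() == total_label.lower():
            total = value
        else:
            items.append(value)
    # With no total row there is nothing to cross-check, so route to a human.
    return total is None or sum(items) != total


if __name__ == "__main__":
    clean = [["Item", "Amount"], ["Rent", "1,200"], ["Power", "340"], ["Total", "1,540"]]
    # Same table with two digits swapped in one cell (340 -> 430).
    swapped = [["Item", "Amount"], ["Rent", "1,200"], ["Power", "430"], ["Total", "1,540"]]
    print(needs_review(clean))    # False
    print(needs_review(swapped))  # True
```

`Decimal` is used instead of `float` so that currency values compare exactly.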

Pricing and deployment specifications

Mistral OCR 3 aggressively disrupts the market with batch API pricing of $1 per 1,000 pages, undercutting legacy providers' pricing by up to 97%. This is a completely SaaS-based model, which eliminates local VRAM requirements but introduces data privacy considerations for regulated industries.

The economic argument for Mistral OCR 3 is as strong as the technical one. For high-volume archival digitization, the cost difference is non-trivial.

| Feature | Specification/Cost |
|---|---|
| Model ID | mistral-ocr-2512 |
| Standard API price | $2 per 1,000 pages (1) |
| Batch API price | $1 per 1,000 pages (50% off) (1) |
| Hardware requirements | None (SaaS). Accessible via the API or the Document AI Playground. |
| Output format | Markdown, structured JSON, HTML (for tables) |

Figure 3: Improvement Rate: Mistral OCR 3 boasts an overall win rate of 74% over its predecessor, V2.


Batch API pricing is especially noteworthy for developers migrating from AWS Textract, where the cost of complex table and form extraction can be significantly higher per page depending on the region and feature flags used.
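The arithmetic behind these prices is simple enough to script when budgeting a digitization backlog. The sketch below uses the two per-1,000-page prices from the table above; the function names and the one-million-page volume are illustrative assumptions.

```python
# Prices per 1,000 pages, as quoted in the table above.
STANDARD_USD_PER_1K = 2.0
BATCH_USD_PER_1K = 1.0


def ocr_cost(pages, usd_per_1k):
    """Total cost in USD for a given page count and per-1,000-page price."""
    return pages / 1000 * usd_per_1k


def savings_pct(price, reference_price):
    """Percentage saved by paying `price` instead of `reference_price`."""
    return (1 - price / reference_price) * 100


if __name__ == "__main__":
    pages = 1_000_000  # hypothetical archive size
    print(ocr_cost(pages, STANDARD_USD_PER_1K))  # 2000.0
    print(ocr_cost(pages, BATCH_USD_PER_1K))     # 1000.0
    print(savings_pct(BATCH_USD_PER_1K, STANDARD_USD_PER_1K))  # 50.0
```

The same `savings_pct` helper can be pointed at any competitor's per-1,000-page rate once the applicable region and feature pricing are known.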

FAQ: Mistral OCR 3

How does the pricing of Mistral OCR 3 compare to AWS Textract and Google Document AI? Mistral OCR 3 costs $1 per 1,000 pages via the Batch API (1). In comparison, AWS Textract and Google Document AI can cost between $1.50 and $15.00 per 1,000 pages, depending on the advanced features used (such as tables or forms), making Mistral significantly more cost-effective for high-volume processing.

Can Mistral OCR 3 recognize scribbles and messy handwriting? Yes. Benchmarks show that it achieves 88.9% accuracy on handwriting, outperforming Azure (78.2%) and DeepSeek (57.2%). Community testing, such as the "Santa Letter" demo, confirmed its ability to parse messy letters.

What are the differences between Mistral OCR 3 and Pixtral Large? Mistral OCR 3 is a specialized model optimized for document parsing, table reconstruction, and Markdown output (1). Pixtral Large is a general-purpose multimodal LLM. OCR 3 is smaller, faster, and cheaper for dedicated document tasks.

How do I use the Mistral OCR 3 Batch API to lower costs? Developers can target the batch processing endpoint when making an API request. It processes documents asynchronously (ideal for archival backlogs) and applies a 50% discount, bringing the cost down to $1 per 1,000 pages (1).
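As a rough illustration of preparing a batch job, the sketch below writes one JSON line per document. The line layout (a `custom_id` plus a `body` holding the OCR request) and the `document_url` payload are assumptions about the batch input format and should be confirmed against the Mistral batch documentation; the helper name and the URLs are hypothetical. Uploading the file and creating the job are not shown.

```python
import json


def build_batch_lines(document_urls):
    """Return one JSON string per document, suitable for writing to a .jsonl file."""
    lines = []
    for index, url in enumerate(document_urls):
        entry = {
            # custom_id lets each result be matched back to its input document.
            "custom_id": str(index),
            # Assumed request body shape for the OCR endpoint.
            "body": {"document": {"type": "document_url", "document_url": url}},
        }
        lines.append(json.dumps(entry))
    return lines


if __name__ == "__main__":
    urls = ["https://example.com/a.pdf", "https://example.com/b.pdf"]  # placeholders
    with open("ocr_batch.jsonl", "w", encoding="utf-8") as handle:
        handle.write("\n".join(build_batch_lines(urls)) + "\n")
```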

Is Mistral OCR 3 available as an open-weight model? No. Currently, Mistral OCR 3 is a proprietary model available only through the Mistral API and the Document AI Playground.

Citation

(1) Mistral AI, "Introducing Mistral OCR 3",
