
## Introduction

Building machine learning models manually involves a long series of decisions: cleaning the data, choosing the right algorithm, and tuning the hyperparameters to get good results. This trial-and-error process often takes hours or even days. The Tree-based Pipeline Optimization Tool, or TPOT, is designed to automate much of this work.

TPOT is a Python library that uses genetic algorithms to automatically discover the best machine learning pipeline. It treats pipelines like populations in nature: it tries many combinations, evaluates their performance, and “evolves” the best one over several generations. This automation allows you to focus on solving your problem while TPOT handles the technical details of model selection and optimization.

## How Does TPOT Work?

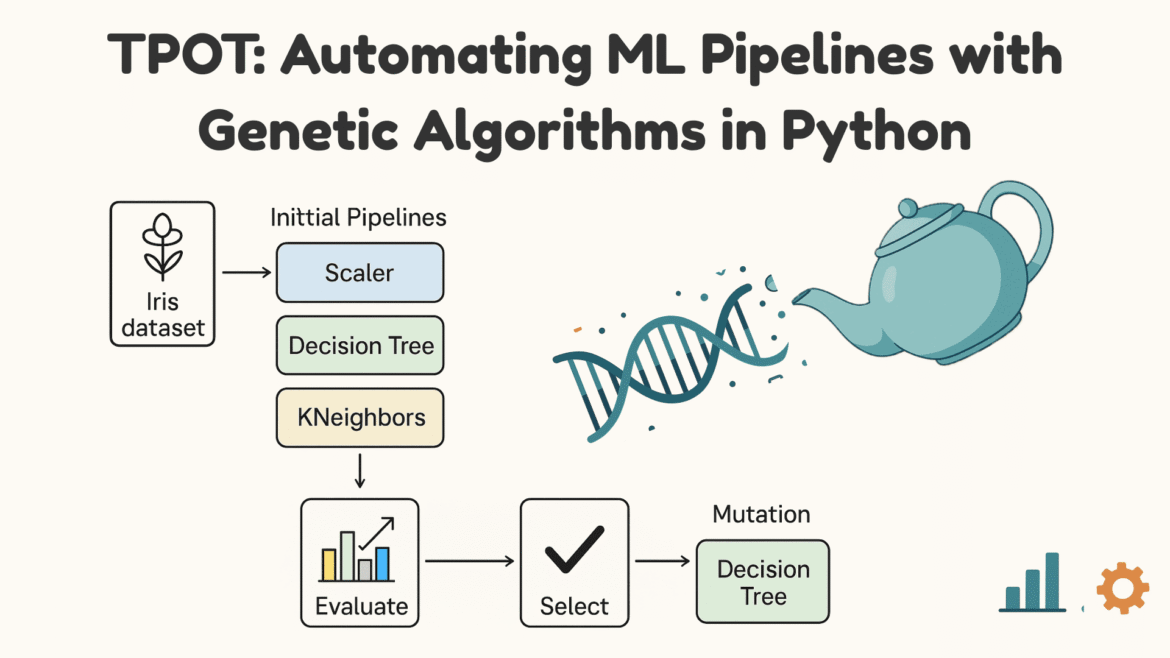

TPOT uses genetic programming (GP), a type of evolutionary algorithm inspired by natural selection in biology. Instead of evolving organisms, GP evolves computer programs or workflows to solve a problem. In the context of TPOT, the “programs” being evolved are machine learning pipelines.

TPOT works in four main stages:

- Generate pipelines: TPOT starts with a random population of machine learning pipelines, each combining preprocessing methods and models.

- Evaluate fitness: Each pipeline is trained and evaluated on the data to measure its performance.

- Selection and reproduction: The best-performing pipelines are selected to “reproduce” and create new pipelines through crossover and mutation.

- Iterate over generations: This process is repeated for several generations until TPOT identifies the best-performing pipeline.
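The four stages can be sketched as a toy evolutionary loop. This is only an illustration, not TPOT's actual implementation: the search space, the `toy_fitness` scoring function, and the selection rule (keep the top half) are all made-up assumptions, and a real run would score each candidate with cross-validation instead.

```python
import random

# Hypothetical toy search space: a "pipeline" is a (scaler, model, complexity) tuple.
SCALERS = ["none", "standard", "minmax"]
MODELS = ["tree", "knn", "logreg"]
COMPLEXITY = list(range(1, 11))


def random_pipeline(rng):
    """Stage 1: sample one random candidate."""
    return (rng.choice(SCALERS), rng.choice(MODELS), rng.choice(COMPLEXITY))


def toy_fitness(pipeline):
    """Stage 2: made-up score standing in for cross-validated accuracy."""
    scaler, model, complexity = pipeline
    score = 0.5
    score += {"none": 0.0, "standard": 0.2, "minmax": 0.1}[scaler]
    score += {"tree": 0.1, "knn": 0.05, "logreg": 0.2}[model]
    score -= 0.01 * abs(complexity - 5)  # pretend 5 is the sweet spot
    return score


def crossover(a, b, rng):
    """Build a child by picking each gene from one of the two parents."""
    return tuple(rng.choice(pair) for pair in zip(a, b))


def mutate(pipeline, rng, rate=0.2):
    """Resample each gene with probability `rate`."""
    options = (SCALERS, MODELS, COMPLEXITY)
    return tuple(
        rng.choice(opts) if rng.random() < rate else gene
        for gene, opts in zip(pipeline, options)
    )


def evolve(generations=5, population_size=20, seed=42):
    rng = random.Random(seed)
    population = [random_pipeline(rng) for _ in range(population_size)]
    best = max(population, key=toy_fitness)
    for _ in range(generations):
        # Stage 3: keep the top half as parents, then breed replacements.
        ranked = sorted(population, key=toy_fitness, reverse=True)
        parents = ranked[: population_size // 2]
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(population_size - len(parents))
        ]
        population = parents + children
        # Stage 4: remember the best candidate seen across generations.
        best = max(population + [best], key=toy_fitness)
    return best


best = evolve()
print(best, round(toy_fitness(best), 3))
```

Because the parents survive into the next generation, the best score in this sketch can never get worse from one generation to the next.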

The figure at the top of this section illustrates this process.

Next, we’ll see how to set up and use TPOT in Python.

## 1. Installing TPOT

To install TPOT, run the following command:

pip install tpot

## 2. Importing the Libraries

Import required libraries:

from tpot import TPOTClassifier

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

## 3. Loading and Partitioning Data

For this example we will use the popular Iris dataset:

iris = load_iris()

X, y = iris.data, iris.target

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

`load_iris()` provides the features `X` and the labels `y`. `train_test_split` holds out a test set so that you can measure final performance on unseen data. This sets up the data on which pipelines will be evaluated: all pipelines are trained on the training portion and validated internally.

Note: TPOT uses internal cross-validation during fitness evaluation.
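To make the idea of internal validation concrete, the sketch below scores a single hand-written candidate pipeline with cross-validation on the training portion only. The choice of `StandardScaler` plus `LogisticRegression`, the five folds, and the accuracy metric are assumptions made for illustration; they are not necessarily what TPOT uses by default.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# One hand-picked candidate; an automated search would generate many of these.
candidate = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# 5-fold cross-validation on the training data; the test set stays untouched.
scores = cross_val_score(candidate, X_train, y_train, cv=5, scoring="accuracy")
fitness = scores.mean()

print("Fold accuracies:", scores)
print("Mean CV accuracy (fitness):", fitness)
```

The mean cross-validated score plays the role of a pipeline's "fitness", while `X_test` and `y_test` are reserved for the final check.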

## 4. Initializing TPOT

Initialize TPOT like this:

tpot = TPOTClassifier(

generations=5,

population_size=20,

random_state=42

)

You can control how long and how widely TPOT searches for a good pipeline. For example:

- `generations=5`: TPOT runs five evolutionary cycles. In each cycle, it creates a new set of candidate pipelines based on the previous generation.

- `population_size=20`: Each generation contains 20 candidate pipelines.

- `random_state=42`: Fixing the random seed makes the results reproducible.

## 5. Training the Model

Train the model by running this command:

tpot.fit(X_train, y_train)

When you run `tpot.fit(X_train, y_train)`, TPOT starts searching for the best pipeline. It creates a set of candidate pipelines, trains each one to see how well it performs (usually using cross-validation), and retains the top performers. Then it recombines and slightly mutates them to create a new group. This cycle repeats for the number of generations you set. Throughout the search, TPOT keeps track of the best-performing pipeline found so far.


## 6. Evaluating Accuracy

This is your final check on how the selected pipeline behaves on unseen data. You can calculate the accuracy as follows:

y_pred = tpot.fitted_pipeline_.predict(X_test)

acc = accuracy_score(y_test, y_pred)

print("Accuracy:", acc)
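For reference, accuracy is simply the fraction of predictions that match the true labels. A minimal sketch with made-up labels (the values in `y_true` and `y_hat` are hypothetical, not results from TPOT) shows that a manual count agrees with `accuracy_score`:

```python
from sklearn.metrics import accuracy_score

# Hypothetical labels for illustration only.
y_true = [0, 1, 2, 2, 1]
y_hat = [0, 1, 2, 1, 1]

# Count matching positions and divide by the number of samples.
manual = sum(t == p for t, p in zip(y_true, y_hat)) / len(y_true)
library = accuracy_score(y_true, y_hat)

print(manual, library)  # 4 of 5 predictions match, so both are 0.8
```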

## 7. Exporting the Best Pipeline

You can export the pipeline to a file for later use. Note that we must import `dump` from `joblib` first:

from joblib import dump

dump(tpot.fitted_pipeline_, "best_pipeline.pkl")

print("Pipeline saved as best_pipeline.pkl")

`joblib.dump()` stores the entire fitted pipeline in `best_pipeline.pkl`.

Output:

Pipeline saved as best_pipeline.pkl

You can load it later like this:

from joblib import load

model = load("best_pipeline.pkl")

predictions = model.predict(X_test)

This makes your models reusable and easy to deploy.
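A quick way to gain confidence in the save-and-load round trip is to check that the restored model predicts exactly what the original did. The sketch below uses a plain scikit-learn pipeline as a stand-in for `tpot.fitted_pipeline_`, on the assumption that the fitted TPOT pipeline is a scikit-learn-compatible estimator; the variable names and the temporary directory are illustrative choices.

```python
import os
import tempfile

import numpy as np
from joblib import dump, load
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Stand-in for tpot.fitted_pipeline_ (assumption: it behaves like any fitted estimator).
stand_in = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)).fit(X, y)

# Write to a temporary directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "best_pipeline.pkl")
    dump(stand_in, path)
    restored = load(path)

# The restored pipeline should reproduce the original predictions exactly.
same = np.array_equal(stand_in.predict(X), restored.predict(X))
print("Predictions identical after reload:", same)
```

Files written by `joblib.dump()` are pickle-based, so load them only from sources you trust, ideally with the same library versions that were used to save them.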

## Wrapping Up

In this article, we looked at how machine learning pipelines can be automated using genetic programming, and we walked through a practical example of using TPOT in Python. For further exploration, please consult the TPOT documentation.

Kanwal Mehreen is a machine learning engineer and a technical writer with a deep passion for data science and the intersection of AI with medicine. She co-authored the eBook “Maximizing Productivity with ChatGPT”. As a Google Generation Scholar 2022 for APAC, she is an advocate for diversity and academic excellence. She has also been recognized as a Teradata Diversity in Tech Scholar, a Mitacs Globalink Research Scholar, and a Harvard WeCode Scholar. Kanwal is a strong advocate for change, having founded FEMCodes to empower women in STEM fields.